Are you familiar with the term "packet loss"? No, it's not when the postman loses your package; it's when data packets go missing in the vast world of the Internet. And if you think losing just a few packets won't affect your Internet speed, think again!

Packet loss is a common occurrence in networked applications, and its impact on performance can vary depending on the application and network conditions. In cloud backup applications, even small levels of packet loss can have a significant impact on transfer time and throughput.

In this blog post, we'll dive into the world of acceptable packet loss and how it can impact your network performance. Spoiler alert: 10% packet loss can make a file transfer more than 100x slower! We’ll also explore the impact of packet loss on cloud backup performance, with real-world scenarios demonstrating the relationship between packet loss, transfer time, and throughput.

Packet loss occurs when data packets fail to reach their intended destination in a network. Common causes include network congestion, equipment failure, and transmission errors. While some level of packet loss is inevitable in any network, keeping the packet loss rate low is essential for reliable performance in networked applications.

The acceptable packet loss rate varies with the type of network and the specific application or use case. In general, a packet loss rate below 1%, and ideally closer to 0.1%, is considered acceptable for most applications.

However, some applications such as online gaming, real-time communication, or VoIP may require even lower packet loss rates to ensure smooth and uninterrupted performance.

For example, a packet loss rate of 0.5% or lower is generally recommended for online gaming, while a packet loss rate of less than 1% is typically acceptable for VoIP. Ultimately, the acceptable packet loss rate will depend on the specific requirements and constraints of the network and the applications running on it.

File transfers, such as cloud backups, are generally more tolerant of packet loss than other applications, but several types of applications are much more sensitive to it.

Real-time applications such as video streaming and VoIP require low latency and a high level of reliability, which makes them more sensitive to packet loss. When packet loss occurs, it can cause significant issues for these applications.

In video streaming, even a small level of packet loss can cause buffering, pixelation, or stuttering, and at higher loss rates the video can become unwatchable. Every lost packet has a direct impact on the quality of the user experience.

For example, a 1% packet loss rate in video streaming may cause occasional buffering, but a 5% packet loss rate can make the video unwatchable.

Like video streaming, VoIP apps are more sensitive to packet loss than many other types of applications. In VoIP, packet loss can cause choppy or distorted voice, dropped calls, and even complete loss of audio, making it impossible to communicate effectively and hurting both user experience and business reputation.

Even a 1% packet loss rate in VoIP may cause occasional dropouts, but a 5% packet loss rate can result in a complete loss of communication. When this happens on calls with customers and partners, it can seriously hurt their satisfaction. This is why VoIP monitoring and measuring packet loss are especially important.

For file transfers and other non-real-time applications, packet loss is generally less critical. Acceptable packet loss rates can vary depending on the size of the transfer and the user's tolerance for delays or disruptions. However, in general, a packet loss rate of less than 5% is considered acceptable for most file transfer applications.
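The thresholds quoted above can be turned into a quick check. The sketch below is a minimal illustration, assuming loss is measured as the share of sent packets that never arrived; the application keys and function names are hypothetical, and the numbers are this article's rules of thumb rather than a formal standard.

```python
# Rules of thumb quoted in this article, in percent. The boolean records
# whether the article says "or lower" (<=) or "less than" (<).
ACCEPTABLE_LOSS = {
    "online_gaming": (0.5, True),   # "0.5% or lower"
    "voip": (1.0, False),           # "less than 1%"
    "file_transfer": (5.0, False),  # "less than 5%"
}
HIGH_LOSS_PCT = 5.0  # loss "higher than 5%" is considered relatively high


def loss_rate_pct(sent: int, received: int) -> float:
    """Percentage of sent packets that never arrived."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100.0 * (sent - received) / sent


def assess_loss(app: str, loss_pct: float) -> str:
    """Classify a measured loss rate for one application type."""
    limit, inclusive = ACCEPTABLE_LOSS[app]
    within = loss_pct <= limit if inclusive else loss_pct < limit
    if within:
        return "acceptable"
    if loss_pct > HIGH_LOSS_PCT:
        return "high"
    return "unacceptable"


if __name__ == "__main__":
    pct = loss_rate_pct(sent=2000, received=1985)  # 15 of 2000 lost
    for app in ACCEPTABLE_LOSS:
        print(app, assess_loss(app, pct))
```

The same 0.75% loss rate is acceptable for VoIP and file transfers but too high for online gaming, which is exactly why a single "acceptable" number rarely fits every application.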

A high packet loss rate occurs when a significant percentage of packets transmitted across a network fail to reach their destination. While there is no universal threshold for what constitutes a high packet loss rate, in general, any packet loss rate higher than 5% is considered to be relatively high and may indicate a problem with the network.

In some cases, a high packet loss rate may be due to issues such as network congestion, network overload, bandwidth limitations, or problems with network infrastructure. High packet loss rates can cause significant performance degradation in network applications, resulting in slow or unresponsive connections, dropped calls or data corruption.

Are you tired of high packet loss rates impacting your network and user experience? Monitor and detect packet loss anywhere in your network with Obkio's powerful Network Performance Monitoring tool. Start with Obkio's Free Trial today!

Don't let high packet loss hold you back - sign up for Obkio's network monitoring solution now!

- 14-day free trial of all premium features

- Deploy in just 10 minutes

- Monitor performance in all key network locations

- Measure real-time network metrics like high and acceptable packet loss

- Identify and troubleshoot live network problems

Packet loss, whether high or within acceptable limits, can have a significant impact on your Internet experience. When data packets are lost, the result can be slower Internet speeds, connection interruptions, and even complete data loss.

High packet loss, typically above 5%, can cause serious disruptions to your Internet connectivity. At this level, you may experience sluggish page loads, choppy video and audio playback, and delays in online gaming. In some cases, high packet loss can even lead to complete Internet or network disconnection.

On the other hand, acceptable packet loss, typically below 1%, may not be noticeable in your Internet usage. However, even small levels of packet loss can result in increased latency, which can make your internet feel slower and less responsive.

Packet loss can affect different applications in different ways, depending on the nature of the application and the amount of packet loss experienced. Here are some common examples:

- Cloud Backup Apps: Cloud backup applications are becoming increasingly popular for data protection and disaster recovery. These applications allow users to back up their data to a remote server over the internet, ensuring that their data is safe and secure. However, packet loss can have a significant impact on the performance of cloud backups, affecting transfer time and throughput.

- Web Browsing: Even a small amount of packet loss can result in slow loading times for web pages. This is because web browsers need to request and receive multiple packets of data to display a single page, and any lost packets can slow down the process.

- Video and Audio Streaming: High packet loss can cause buffering, stuttering, and other interruptions for audio and video applications like Zoom and Microsoft Teams. This is because streaming services rely on a constant stream of data packets to deliver a smooth viewing experience, and any lost packets can disrupt the stream.

- VoIP (Voice over Internet Protocol): VoIP services like Microsoft Teams and Zoom rely on a constant stream of data packets to deliver clear and uninterrupted audio. High packet loss can result in poor call quality, dropped calls, and difficulty communicating with others.

The acceptable packet loss rate can vary depending on the specific application and network conditions. Do you know what factors can impact the acceptable packet loss rate for cloud backup applications? There are three key factors that can determine whether your data is backed up safely or at risk of being lost:

- Size of the backup: The size of the backup can determine how long it takes to upload or download the data to and from the cloud. If the backup is small, the user may be able to tolerate a higher packet loss rate because it won't significantly impact the overall backup time. However, if the backup is large, even a small packet loss rate can result in significant delays and potentially disrupt the backup process. Therefore, a small backup may be less sensitive to packet loss than a large backup, as the transfer time for a small backup is generally shorter.

- Bandwidth of the network connection: The bandwidth of the network connection can determine how quickly the backup data can be transmitted to and from the cloud. If the network connection has a high bandwidth, the user may be able to tolerate a higher packet loss rate because the data can be transmitted quickly even with some packet loss. However, if the network connection has a low bandwidth, a higher packet loss rate can result in significant delays and potentially disrupt the backup process. Therefore, a high-bandwidth network connection may be more tolerant of packet loss than a low-bandwidth connection, as it can compensate for the loss of packets by sending more packets per unit of time.

- User's tolerance for disruption: The user's tolerance for disruption can also impact the acceptable packet loss rate. If the user is willing to tolerate some disruptions or delays in the backup process, they may be able to tolerate a higher packet loss rate. However, if the user requires a seamless and uninterrupted backup process, even a small packet loss rate may be unacceptable.

Some users may be more tolerant of occasional disruptions in the backup process, while others require a more reliable one. Because backups put a lot of pressure on enterprise networks, they usually run after business hours, and they need to finish before people get back to the office.

For example, a 1TB backup could start at midnight every day, but with packet loss on the line, it might not finish before the next business day begins.
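To see why, you can estimate whether a backup fits its window from its size, its loss-free throughput, and a slowdown factor. This is a rough sketch: the 500 Mbps loss-free throughput and the midnight-to-8 AM window are hypothetical values, not measurements, while the slowdown factors are the ones reported in the test results later in this article.

```python
SECONDS_PER_HOUR = 3600


def transfer_seconds(size_bytes: float, throughput_bps: float) -> float:
    """Time to move size_bytes at a steady throughput given in bits per second."""
    return size_bytes * 8 / throughput_bps


def fits_backup_window(size_bytes, loss_free_bps, slowdown, window_s):
    """Return (fits, duration_s) for a backup that runs `slowdown` times
    longer than it would with 0% packet loss."""
    duration = transfer_seconds(size_bytes, loss_free_bps) * slowdown
    return duration <= window_s, duration


if __name__ == "__main__":
    ONE_TB = 1e12                    # decimal terabyte, in bytes
    LOSS_FREE_BPS = 500e6            # hypothetical loss-free throughput
    WINDOW_S = 8 * SECONDS_PER_HOUR  # hypothetical window: midnight to 8 AM
    # Slowdown factors from this article's 1TB extrapolation.
    for loss, slowdown in [("0%", 1.0), ("1%", 4.06), ("5%", 36.16)]:
        ok, secs = fits_backup_window(ONE_TB, LOSS_FREE_BPS, slowdown, WINDOW_S)
        print(f"{loss}: {secs / SECONDS_PER_HOUR:.1f} h, fits={ok}")
```

Under these assumptions, the loss-free backup takes about 4.4 hours and fits comfortably, while a 4.06x slowdown stretches it to about 18 hours, far past the start of the business day.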

Are you ready to find out what it takes to ensure your precious data is backed up safely in the cloud? Determining acceptable packet loss rates for cloud backups is crucial in ensuring that your data is transmitted reliably and securely. Let's dive in and explore how to determine the acceptable packet loss rate for your cloud backups!

Let’s talk about two different techniques for determining acceptable packet loss rates.

- Network Performance Monitoring: To determine the acceptable packet loss rate for cloud backups, you need to consider the factors that can affect backup performance. One way to do that is by using Obkio’s Network Performance Monitoring tool. Obkio measures packet loss, transfer time, and network throughput during backup operations by sending synthetic UDP traffic every 500ms throughout your network and measuring key performance indicators. This can help identify the level of packet loss that is acceptable for a particular backup size and network connection.

- Performance Test: Another approach to determining an acceptable packet loss rate is to conduct a performance test to determine the maximum acceptable packet loss rate for a specific backup scenario. This can involve running backup operations with varying levels of packet loss and measuring the impact on transfer time and throughput. The acceptable packet loss rate can then be determined based on the results of the performance test.
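The idea behind probe-based loss measurement can be sketched with sequence numbers. This is not Obkio's implementation, only a minimal illustration assuming each synthetic UDP probe carries an incrementing sequence number and the receiver knows which range the sender emitted; the function names are hypothetical. At a 500ms interval, a one-minute window contains 120 probes.

```python
def probes_expected(window_ms: int, interval_ms: int = 500) -> int:
    """Number of probes a sender emits in a window at a fixed interval."""
    return window_ms // interval_ms


def probe_loss(received_seqs, first_seq: int, last_seq: int):
    """Return (missing sequence numbers, loss percentage) for probes
    first_seq..last_seq. Duplicates and reordering do not affect the result."""
    if last_seq < first_seq:
        raise ValueError("last_seq must be >= first_seq")
    expected = range(first_seq, last_seq + 1)
    seen = set(received_seqs)
    missing = [seq for seq in expected if seq not in seen]
    return missing, 100.0 * len(missing) / len(expected)


if __name__ == "__main__":
    print(probes_expected(60_000))                   # probes in one minute
    print(probe_loss([0, 1, 3, 3, 2, 5], 0, 5))      # reordered, duplicated, one lost
```

Using the sender's last sequence number, rather than the highest one received, means probes lost at the end of a window are still counted, and the set makes duplicated or reordered probes harmless.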

P.S. In the packet loss test we’ll show you later, we use both techniques: we measure acceptable packet loss with a performance test using a 1GB file, as well as with Obkio’s Network Performance Monitoring tool.

Packet loss can have a significant impact on the performance of cloud backup applications. When packets are lost, it can result in delays, retransmissions, and in some cases, the loss of data altogether. These issues can lead to slower backup speeds, longer backup times, and potential data loss.

In addition, packet loss can also increase network congestion and cause other applications to suffer from performance degradation, which is why it's essential to monitor and measure packet loss to ensure optimal cloud backup performance and avoid any potential data loss or network disruptions.

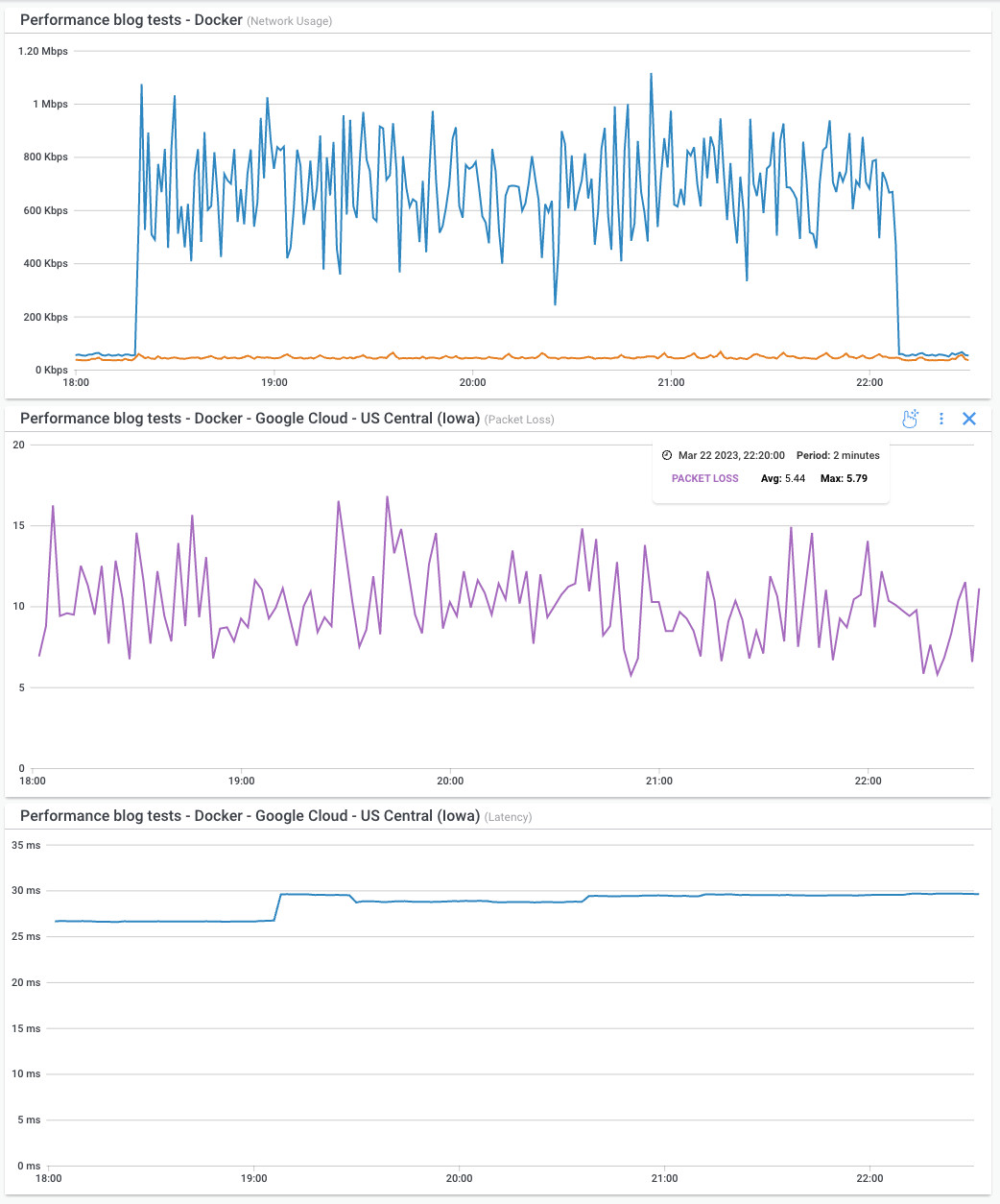

To illustrate the impact of packet loss on cloud backup performance, we conducted a series of performance tests using a Docker container running on an EC2 instance from the AWS Cloud.

The EC2 instance had a 1Gbps Ethernet card, providing theoretical 1Gbps Internet access. To simulate packet loss, we used Linux Traffic Control (tc) to introduce packet loss on the inbound traffic of the Docker container's Ethernet interface.
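For readers who want to reproduce a similar setup, here is a minimal sketch of stepping through loss levels with tc's netem queueing discipline from Python. It is an assumption about how such a test can be scripted, not the exact commands we ran: a root netem qdisc acts on packets leaving an interface, so one way to drop traffic arriving in a container is to attach it to the host-side veth peer of the container's interface. The interface name is a placeholder, and running the commands requires root or CAP_NET_ADMIN.

```python
import shlex
import subprocess


def netem_loss_cmd(iface: str, loss_pct: float) -> list[str]:
    """Build a tc command that attaches (or replaces) a root netem qdisc on
    `iface`, randomly dropping about loss_pct percent of the packets it sends."""
    if not 0 <= loss_pct <= 100:
        raise ValueError("loss_pct must be between 0 and 100")
    return ["tc", "qdisc", "replace", "dev", iface, "root",
            "netem", "loss", f"{loss_pct:g}%"]


def netem_clear_cmd(iface: str) -> list[str]:
    """Build a tc command that removes the root qdisc again."""
    return ["tc", "qdisc", "del", "dev", iface, "root"]


def run(cmd: list[str]) -> None:
    """Execute a tc command; needs root or CAP_NET_ADMIN."""
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # "veth-host" is a placeholder for the host-side peer of the container's NIC.
    for pct in (0, 1, 3, 5, 10, 25):
        print(shlex.join(netem_loss_cmd("veth-host", pct)))
```

Using `replace` rather than `add` lets the same command move from one loss level to the next without deleting the qdisc in between.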

We downloaded a 1GB file that is openly available on OVH servers, using Wget from inside the container with a high retry count and a 15-second read timeout instead of the default 15 minutes, so that the download keeps progressing even on an unreliable network. Here's the Wget command used to download the file:

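```shell
# --output-file writes Wget's log to /tmp/file.tmp; the download itself is saved as 1Gb.dat
wget https://proof.ovh.net/files/1Gb.dat --output-file /tmp/file.tmp --show-progress --tries=2000 --read-timeout=15
```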

We measured the download duration and average throughput at several levels of packet loss: 0%, 1%, 3%, 5%, 10%, and 25%. We used a 1GB file because downloading a 1TB file with packet loss would take an extremely long time. However, for businesses working with graphic designers, video and image processing, or rendering video or gaming content, a 1GB file is insignificant.

Therefore, we also extrapolated the results for a 1TB file using the same packet loss and average throughput parameters. The results of the performance tests for both 1GB and 1TB files are shown in the tables below.

Note: (A) and (B) denote two separate tests with 10% packet loss.

- Test (A) was done with a specific read timeout of 15 seconds

- Test (B) was done with Wget's default read timeout of 15 minutes.

The read timeout resets the connection when the socket to the web server is still established but no data is being received. In that situation the download makes no progress until the timeout expires and the connection is reset, so a longer timeout means longer stalls.
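A back-of-the-envelope model shows why the read timeout matters so much at high loss. Assuming, hypothetically, that every stall leaves the connection idle for the full read timeout before Wget resets it, the idle time grows linearly with the timeout; the stall count below is made up for illustration.

```python
SECONDS_PER_HOUR = 3600


def stall_wait_s(stalls: int, read_timeout_s: float) -> float:
    """Worst-case time lost to stalls, assuming each stalled connection
    sits idle for the full read timeout before Wget resets it."""
    if stalls < 0 or read_timeout_s < 0:
        raise ValueError("stalls and read_timeout_s must be non-negative")
    return stalls * read_timeout_s


if __name__ == "__main__":
    STALLS = 10  # hypothetical number of stalls during one download
    for label, timeout in (("--read-timeout=15", 15), ("default (900 s)", 900)):
        wait = stall_wait_s(STALLS, timeout)
        print(f"{label}: {wait:.0f} s ({wait / SECONDS_PER_HOUR:.2f} h) idle")
```

With ten stalls, a 15-second timeout costs 150 seconds of idle time, while Wget's 15-minute default costs 9,000 seconds (2.5 hours).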

As you can see from the table, even a small level of packet loss (1%) has a significant impact on throughput, reducing it by more than 75%. At higher levels of packet loss (5% and 10%), the impact on throughput becomes even more significant.
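One widely used approximation helps explain why a 1Gbps link offers little protection once packets are lost: the Mathis et al. model of steady-state TCP throughput, throughput ≈ (MSS / RTT) × C / √p, where p is the loss rate and C = √(3/2) ≈ 1.22. The sketch below assumes Reno-style congestion avoidance and ignores retransmission timeouts, so it is optimistic at high loss and will not reproduce our measured numbers; the 20 ms RTT is hypothetical.

```python
import math

MATHIS_C = math.sqrt(3 / 2)  # constant for periodic loss without delayed ACKs


def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Approximate steady-state TCP throughput (bits/s) for a given loss rate.

    Mathis et al. model: throughput ~ (MSS / RTT) * C / sqrt(p).
    Retransmission timeouts are ignored, so results are optimistic at high loss.
    """
    if not 0 < loss_rate <= 1:
        raise ValueError("loss_rate must be in (0, 1]")
    return (mss_bytes * 8 / rtt_s) * MATHIS_C / math.sqrt(loss_rate)


if __name__ == "__main__":
    MSS = 1460   # typical Ethernet MSS in bytes
    RTT = 0.020  # hypothetical 20 ms round-trip time
    for p in (0.001, 0.01, 0.05, 0.10):
        print(f"{p:.1%} loss -> {mathis_throughput_bps(MSS, RTT, p) / 1e6:.1f} Mbps")
```

With a 1460-byte MSS and that RTT, the estimate is roughly 7 Mbps at 1% loss, and the link's 1Gbps capacity does not appear in the formula at all: once loss sets in, the loss rate and round-trip time, not the link speed, cap the transfer.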

Because testing with a larger file would have been impractical at the loss levels we simulated, we extrapolated the 1GB results to a 1TB transfer, a more realistic size for businesses that work with graphic designers, video and audio editors, or rendering for video games or movies, assuming the same packet loss rate and average throughput.

As we can see, even for a larger file size of 1TB, packet loss still has a significant impact on throughput.

- At 1% packet loss, the slowdown factor is only 4.06x

- But at 5% packet loss, the slowdown factor jumps to 36.16x.

- And at 10% packet loss, the slowdown factor is over 100x for both tests (104.60x and 239.66x), meaning the transfer time is over 100 times longer compared to the same transfer with 0% packet loss.
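The performance-test approach turns results like these into a threshold. This is a minimal sketch, assuming your backup has a fixed time budget; the function names and the 2-hour/8-hour example are hypothetical, and only the loss levels whose slowdown factors are quoted above are included (for 10%, the lower of the two figures).

```python
# Slowdown factors (transfer time vs. 0% loss) quoted in this article's results.
# For 10% loss, the lower of the two test figures is used.
REPORTED_SLOWDOWN = {0.0: 1.0, 1.0: 4.06, 5.0: 36.16, 10.0: 104.60}


def slowdown_budget(window_s: float, loss_free_s: float) -> float:
    """How many times longer than its loss-free duration a backup may run."""
    return window_s / loss_free_s


def max_acceptable_loss(results: dict[float, float], max_slowdown: float):
    """Highest tested loss rate (percent) whose slowdown stays within budget,
    or None if no tested level qualifies."""
    within = [loss for loss, factor in results.items() if factor <= max_slowdown]
    return max(within) if within else None


if __name__ == "__main__":
    # Hypothetical: a backup that takes 2 h loss-free must finish within 8 h.
    budget = slowdown_budget(8 * 3600, 2 * 3600)
    print(budget, max_acceptable_loss(REPORTED_SLOWDOWN, budget))
```

In that example the budget is 4x, and since even 1% loss produced a 4.06x slowdown, only the 0% level qualifies. Because only discrete levels were tested, the true threshold lies somewhere between the returned level and the next one tested.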

These results demonstrate the importance of minimizing packet loss in data transfers, especially for larger files that are common in industries such as media and entertainment, architecture, and engineering. High packet loss can result in significant delays and increased transfer times, leading to reduced productivity and potential business losses.

Detecting and troubleshooting packet loss is crucial in ensuring acceptable packet loss rates and maintaining a reliable network. In this section, we'll explore some common techniques for detecting and troubleshooting packet loss, as well as strategies for ensuring acceptable packet loss rates.

- Network Performance Monitoring: When packet loss occurs, it is important to identify and address the root cause to prevent further issues. One approach is to use a Network Performance Monitoring tool like Obkio to monitor network performance, identify the source of the packet loss, and troubleshoot the issue.

- FEC & Packet Retransmission: Forward Error Correction (FEC) and packet retransmission can also be effective strategies for addressing packet loss, but they should be used as a last resort. Finding and fixing the root cause of packet loss is the best way to ensure reliable network performance.

- Congestion Control: Congestion control is another effective strategy for addressing packet loss. It involves monitoring and controlling the flow of data in the network to prevent congestion (WAN or LAN congestion) and reduce packet loss. Congestion control can be implemented in several ways, such as Quality of Service (QoS) settings or traffic shaping.
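Traffic shaping is commonly built on a token bucket: tokens accumulate at the shaped rate up to a burst size, and a packet may leave only when enough tokens are available. The sketch below is a generic illustration, not the configuration of any particular router or QoS product, and the class and function names are hypothetical. It returns how long a packet must wait, which is what a shaper does; a policer would drop the packet instead.

```python
import time


class TokenBucket:
    """Token-bucket shaper: sustained rate_bps with bursts up to burst_bytes."""

    def __init__(self, rate_bps: float, burst_bytes: float, clock=time.monotonic):
        self.rate = rate_bps / 8     # refill speed in bytes per second
        self.capacity = burst_bytes  # largest burst allowed
        self.tokens = burst_bytes    # start with a full bucket
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def reserve(self, packet_bytes: int) -> float:
        """Return 0.0 and consume tokens if the packet may be sent now,
        otherwise the number of seconds to wait before trying again."""
        if packet_bytes > self.capacity:
            raise ValueError("packet larger than burst size")
        self._refill()
        if self.tokens >= packet_bytes:
            self.tokens -= packet_bytes
            return 0.0
        return (packet_bytes - self.tokens) / self.rate


def send_shaped(bucket: TokenBucket, packets, send, sleep=time.sleep) -> None:
    """Pass each packet (bytes) to `send`, pausing as the bucket requires."""
    for pkt in packets:
        while (wait := bucket.reserve(len(pkt))) > 0:
            sleep(wait)
        send(pkt)
```

Shaping bulk traffic such as backups slightly below the bottleneck rate keeps queues from overflowing, which is how this kind of congestion control reduces packet loss for everything else sharing the link.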

Uncover the Power of Obkio Network Performance Monitoring to Fix Packet Loss Issues & Ensure Acceptable Packet Loss Rates!

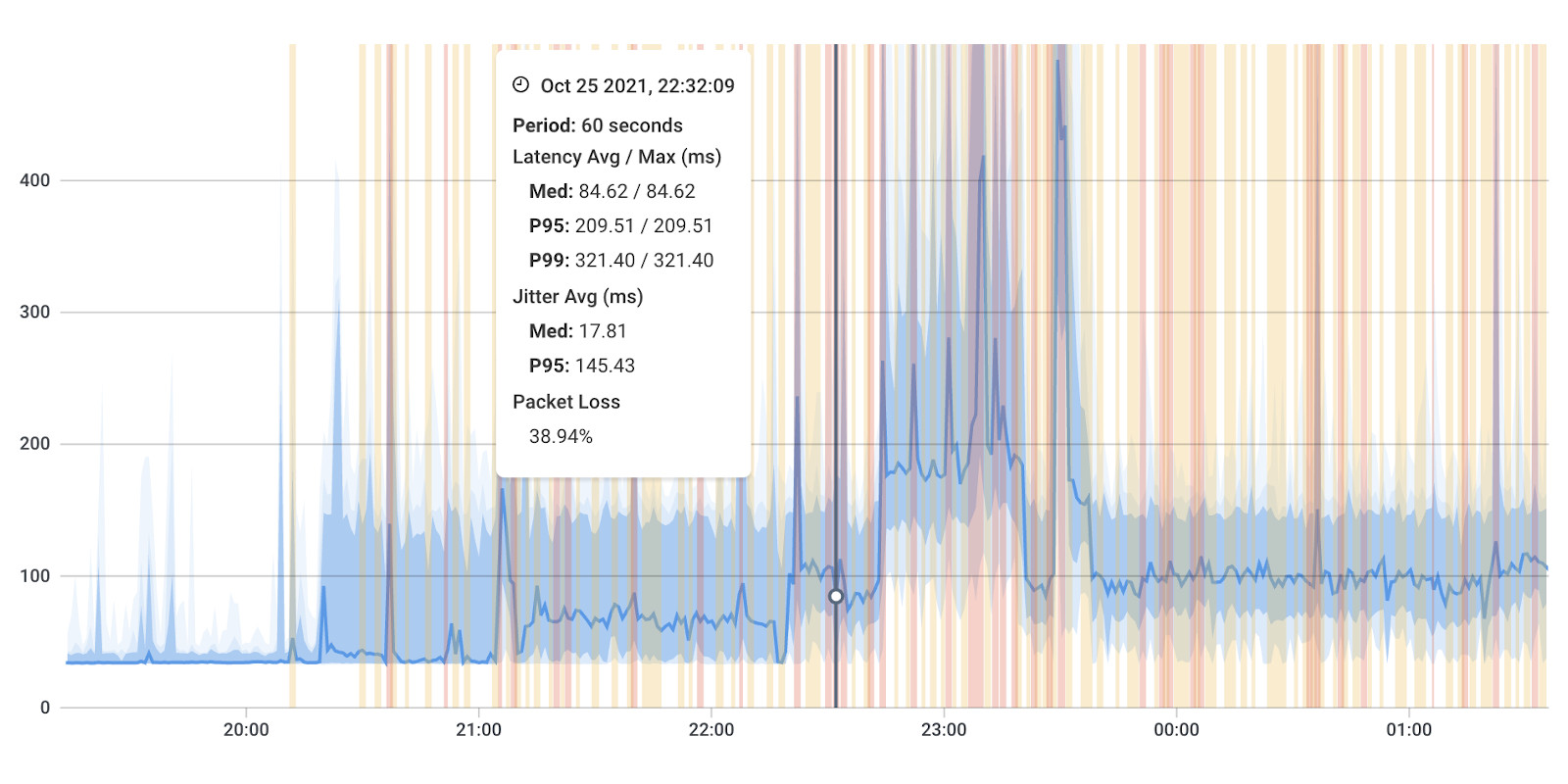

To help monitor packet loss, throughput, and transfer time in cloud backups and other applications, we recommend using Obkio’s Packet Loss Monitoring tool. Obkio provides real-time monitoring of network performance, including packet loss rates, latency, and bandwidth usage.

Obkio’s solution identifies even the earliest signs of packet loss anywhere in your network and then collects the data you need to troubleshoot the packet loss. Get started with Obkio’s free trial now!

- Monitor Packet Loss and Network Performance: Obkio continuously monitors packet loss and uses Monitoring Agents that exchange synthetic traffic and monitor packet loss between any two points in your network.

- Identify Packet Loss in All Network Locations: Obkio measures packet loss using continuous synthetic traffic from Network Monitoring Agents deployed in key network locations like offices, data centers and clouds.

- Proactive Packet Loss Alerts: Once you’ve set up your Monitoring Agents and they’ve started collecting data, you can easily check if any packet loss is happening in your network on Obkio’s Network Response Time Graph. You can even set up automatic network monitoring alerts to be proactively notified of any signs of packet loss.

- Troubleshoot Packet Loss In Your Network: Compare Obkio graphs to identify whether packet loss is happening on one network session (towards a specific location on the Internet) or on two network sessions (on a network segment common to both sessions) for targeted packet loss troubleshooting.

- Troubleshoot Packet Loss in Network Devices: Use Network Device Monitoring features to find out whether the packet loss is happening in your local network, on your own network devices. If so, take steps to troubleshoot internally.

- Troubleshoot Packet Loss in Your ISP's Network: Use Obkio Vision, Obkio's Visual Traceroute tool, to identify packet loss happening in your ISP’s network. Share the traceroute results with your ISP to open and escalate your support case.
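The session comparison described above boils down to simple logic. This sketch is a hypothetical illustration, not an Obkio feature: it assumes you already have loss percentages for two sessions that share part of their path, and the 1% threshold is an arbitrary example.

```python
def locate_loss(loss_a_pct: float, loss_b_pct: float, threshold_pct: float = 1.0) -> str:
    """First guess at where loss sits, given loss measured on two monitoring
    sessions (A and B) that share part of their network path."""
    a = loss_a_pct >= threshold_pct
    b = loss_b_pct >= threshold_pct
    if a and b:
        return "shared segment"  # e.g. a local network or ISP link both sessions use
    if a:
        return "path towards A"
    if b:
        return "path towards B"
    return "no significant loss"


if __name__ == "__main__":
    print(locate_loss(3.0, 2.5))
    print(locate_loss(3.0, 0.0))
```

Loss on both sessions points at the segment they have in common, which is where device monitoring or a traceroute should look next; loss on only one session points at the path unique to that destination.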

With Obkio, you can say goodbye to packet loss! But it’s important to keep monitoring packet loss and network performance so you can identify and troubleshoot packet loss issues as soon as they happen. Get started with Obkio’s Free Trial!

Packet loss can have a significant impact on networked applications, especially in cloud backup applications. To ensure reliable backup operations, it is essential to maintain a low or acceptable packet loss rate, which can be determined based on the specific backup scenario and network conditions.

Acceptable packet loss rates vary with the specific application and network conditions. File transfers such as cloud backups are generally more tolerant of packet loss, while real-time applications such as video streaming and VoIP require low latency and a high level of reliability, which makes them more sensitive to it.

To address packet loss in cloud backups and other applications, several strategies can be used, such as forward error correction, packet retransmission, and congestion control.

So, the next time you're faced with packet loss, remember that acceptable packet loss rates are essential for a reliable network. Obkio’s Network Performance Monitoring App is the most effective tool for monitoring network performance and identifying any issues that may affect backup operations or other networked applications.

Don't let packet loss slow you down - keep your network running smoothly and enjoy seamless performance in all your favourite applications with Obkio’s Free Trial!

Obkio Blog
