Table of Contents


“Would it save you a lot of time if I just gave up and went mad now?” ― Douglas Adams, The Hitchhiker's Guide to the Galaxy

In today's digital world, we rely on fast and reliable internet connections for everything from business operations to communication. However, even the fastest internet connection can be slowed down by network delay, also known as latency.

As a software and SaaS professional, I have developed a strong interest in network latency and its impact on customer experience and business performance. Over the years, I have witnessed firsthand how high latency can cause delays, buffering, and lag in data transmission, resulting in decreased productivity.

In this article, we'll explain what latency is, why it matters, and how to minimize it.

In networking, latency refers to the delay or time it takes for data to travel from one point in a network to another. It is often measured as the time difference between when a device sends a request for data and when it receives the requested data. Latency can be caused by a variety of factors, including the distance between devices, network congestion, and hardware or software limitations.
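To make this definition concrete, here is a minimal Python sketch that times how long it takes to open a TCP connection, which needs roughly one network round trip. The function name `tcp_connect_latency_ms` is our own, and the host and port are whatever you choose to pass in. Treat it as an illustration, not a replacement for a proper monitoring tool.

```python
import socket
import time


def tcp_connect_latency_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Return the time, in milliseconds, taken to open a TCP connection."""
    start = time.perf_counter()
    # create_connection performs the TCP handshake, which takes about one round trip.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0
```

For example, `tcp_connect_latency_ms("www.google.com", 443)` returns the connection time in milliseconds. The value will vary from run to run and from network to network.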

High latency can result in delays, buffering, and lag in data transmission, which can impact the performance and user experience of various applications, including VoIP calls, video conferencing, and file transfers.

Imagine a company that relies on a web-based inventory management system to keep track of their products. When employees access the system, they need to input information such as product names, quantities, and prices, and the system responds by updating the database and displaying the updated information.

If the network has high latency, there will be a delay between when an employee inputs information and when the system responds. This delay can make the system feel slow and unresponsive, and it can also cause errors if multiple employees are trying to update the same product at the same time.

For example, if an employee enters a product quantity of 50 and then clicks "submit," but the system takes several seconds to respond, another employee might also try to update the same product with a different quantity. If the system processes the second employee's request first, the database will be updated with the wrong quantity, leading to inventory discrepancies and potential loss of revenue.
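The scenario above can be sketched as a small simulation. It assumes the simplest possible server behavior, where updates are applied in the order they arrive and the last one to arrive wins. The `Update` class, the `final_quantity` function, and all of the numbers are hypothetical and only serve to illustrate the ordering problem.

```python
from dataclasses import dataclass


@dataclass
class Update:
    employee: str
    quantity: int
    sent_at_s: float   # when the employee clicked "submit"
    latency_s: float   # network delay before the server receives the request

    @property
    def arrives_at_s(self) -> float:
        return self.sent_at_s + self.latency_s


def final_quantity(updates: list[Update]) -> int:
    """Apply updates in arrival order; the last one to arrive wins."""
    quantity = 0
    for update in sorted(updates, key=lambda u: u.arrives_at_s):
        quantity = update.quantity
    return quantity


# Hypothetical numbers: employee A submits first, but over a slow connection.
a = Update("A", quantity=50, sent_at_s=0.0, latency_s=3.0)
b = Update("B", quantity=40, sent_at_s=1.0, latency_s=0.1)

# B's request arrives at 1.1 s and A's at 3.0 s, so A's older value is stored last.
print(final_quantity([a, b]))  # 50
```

With low latency on both connections, the requests would arrive in the order they were sent and the most recent value (40) would be the one stored.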

Therefore, minimizing latency is crucial for the smooth and efficient operation of the company's inventory management system, and any other systems that rely on real-time communication between employees and databases.

Latency is a common issue that can impact the performance of networks and applications, causing delays and frustration for users. To effectively minimize latency and optimize network performance, it's important to understand the factors that contribute to latency.

In this section, we will explore the most common causes of latency, including network congestion, the distance between devices, hardware and software limitations, and examples of latency in different contexts.

By gaining an understanding of what causes latency, we can implement strategies to minimize its impact and deliver a fast, reliable, and seamless network experience.

When there is a lot of traffic on a network, data packets compete with one another for bandwidth and have to wait in queues, which delays them. This is known as network congestion. Common causes include:

- High traffic times: During peak usage hours, such as during business hours or in the evening when people are streaming video, a network can become congested as more data is transmitted than usual.

- File downloads: Large file downloads, such as software updates or multimedia files, can generate a lot of network traffic and cause congestion, especially if multiple users are downloading files simultaneously.

- Cloud-based applications: As more businesses move their applications to the cloud, accessing these applications can generate significant network traffic and cause congestion.

- Video conferencing: Video conferencing requires a lot of bandwidth and can cause network congestion, especially if multiple people are participating in the same call.

- Distributed denial-of-service (DDoS) attacks: In a DDoS attack, a large number of computers send traffic to a single server, overwhelming it and causing network congestion.

- Internet of Things (IoT) devices: As more IoT devices are connected to a network, they can generate a large amount of traffic, which can cause congestion if the network is not designed to handle it.

In all of these examples, the network becomes congested because there is more data trying to move through it than the network can handle efficiently. This can lead to slower data transfer rates, delays in accessing applications, and other performance issues.
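As a rough worked example of why congestion adds delay, consider a packet that arrives at a link where other data is already queued. It has to wait for that backlog to be transmitted first. The sketch below estimates that wait; the function name and the numbers are illustrative assumptions, and real devices add their own processing time on top.

```python
def queueing_delay_ms(backlog_bytes: int, link_rate_bps: float) -> float:
    """Time a newly arrived packet waits while the backlog ahead of it drains."""
    if link_rate_bps <= 0:
        raise ValueError("link_rate_bps must be positive")
    return backlog_bytes * 8 / link_rate_bps * 1000.0


# Illustrative values: 1 MB queued ahead of the packet on a 100 Mbit/s link.
# 8,000,000 bits / 100,000,000 bits per second is about 0.08 s, or 80 ms.
print(f"{queueing_delay_ms(1_000_000, 100e6):.0f} ms")
```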

The farther data has to travel between two devices or servers, the longer it takes to arrive, which can increase latency.

Here are some examples of how distance can cause latency for businesses:

- Remote offices: If a business has offices in different locations, accessing data or applications from a remote office can cause latency due to the distance between the offices. For example, if a company's headquarters are in New York and it has a branch office in London, accessing data from the New York office could take longer due to the physical distance between the two locations.

- Cloud-based applications: If a business uses cloud-based applications that are hosted in a different region or country, accessing those applications can cause latency due to the distance between the business and the cloud provider's data center.

- Server location: If a business has servers located in different geographic locations, accessing data or applications from a server that is far away can cause latency due to the physical distance between the user and the server.

- Remote workers: If a business has employees who work remotely from home or other locations, accessing data or applications over the internet can cause latency due to the distance between the employee and the business's network.

In all of these examples, the physical distance between the user and the data or application they are trying to access can cause latency. This is because the data has to travel over a longer distance, which can take more time and cause delays.
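A quick back-of-the-envelope calculation shows how much delay distance alone can add. Signals in optical fiber travel at roughly two-thirds of the speed of light, or about 200,000 km per second. The sketch below uses that figure together with an approximate New York to London distance of 5,570 km. Both numbers are rounded assumptions, and real cable routes are longer than the straight-line distance.

```python
SPEED_IN_FIBER_KM_PER_S = 200_000  # roughly two-thirds of the speed of light


def propagation_delay_ms(distance_km: float) -> float:
    """One-way delay caused purely by the distance the signal travels."""
    return distance_km / SPEED_IN_FIBER_KM_PER_S * 1000.0


NY_TO_LONDON_KM = 5_570  # approximate straight-line distance (assumption)
one_way = propagation_delay_ms(NY_TO_LONDON_KM)

# About 28 ms one way and 56 ms for the round trip, before any other delays.
print(f"one-way: {one_way:.0f} ms, round trip: {2 * one_way:.0f} ms")
```

No hardware upgrade can remove this part of the delay, which is why moving content closer to the user is so effective.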

To minimize latency in these situations, businesses can use techniques such as content delivery networks (CDNs), which cache content closer to the user. Virtual private networks (VPNs) provide a secure connection between remote users and the business's network, but they add encryption and routing overhead of their own, so a VPN gateway located close to the user helps keep that extra delay small.

Older or less powerful hardware, such as routers or switches, may struggle to process and transmit data quickly, leading to latency.

Here are some examples of how hardware limitations can cause latency for businesses:

- Outdated routers and switches: Older routers and switches may not have the processing power or memory to handle large amounts of network traffic, leading to latency.

- Low-bandwidth connections: Some types of internet connections, such as dial-up or satellite, have limited bandwidth, so data takes longer to transfer. Satellite links also add delay because the signal has to travel to orbit and back.

- Insufficient RAM: If a device does not have enough RAM, it may struggle to handle multiple applications or processes simultaneously, leading to latency as it tries to switch between tasks.

- Overloaded servers: If a server is handling too many requests or is not configured optimally, it may experience latency as it struggles to keep up with the workload.

- Network interface cards (NICs): If a NIC is not functioning properly or is outdated, it may struggle to process network traffic, leading to latency.

In all of these examples, hardware limitations can cause latency as devices struggle to process or transfer data quickly enough.

To minimize latency in these situations, businesses may need to upgrade their hardware or optimize their network configurations to handle the workload more efficiently.

Bugs or errors in network protocols or applications can cause delays or require additional processing, leading to latency.

Here are some examples of how software issues can cause latency for businesses:

- Operating system issues: If an operating system is not optimized or configured properly, it may struggle to handle multiple applications or processes simultaneously, leading to latency.

- Application bugs: If an application has bugs or errors, it may take longer to process data or respond to user input, leading to latency.

- Network protocol inefficiencies: Some network protocols may not be optimized for efficient data transfer, leading to latency as data takes longer to transfer.

- Security software: If a business uses security software such as firewalls or intrusion detection systems, it may cause latency as data is inspected for potential threats.

- Virtualization issues: If a business uses virtualization software to run multiple operating systems or applications on a single physical server, it may cause latency as resources are shared among the virtual machines.

In all of these examples, software issues can cause latency as applications or processes struggle to handle data efficiently. To minimize latency in these situations, businesses may need to update or optimize their software configurations, or use more efficient protocols or applications.

Depending on the type and quality of the internet connection, ISPs can impose limitations on bandwidth or prioritize certain types of traffic, leading to latency for other types of traffic.

Here are some examples of how Internet Service Provider (ISP) limitations can cause latency for businesses:

- Bandwidth limitations: Some ISPs may impose limits on the amount of bandwidth that a business can use, which can cause latency if the business exceeds its allotted bandwidth.

- Network congestion: If an ISP's network is congested due to high traffic volumes, it can cause latency for businesses that are using the network.

- Quality of Service (QoS) limitations: Some ISPs may use Quality of Service to prioritize certain types of traffic, such as video or voice traffic, over other types of traffic, which can cause latency for businesses that are not prioritized.

- Distance to ISP infrastructure: If a business is located far from an ISP's infrastructure, it may cause latency as data has to travel over a longer distance to reach the business.

- Internet exchange point (IXP) limitations: If an ISP does not have a presence at an IXP, it may have to route traffic over longer distances, which can cause latency for businesses.

In all of these examples, ISP limitations can cause latency as businesses struggle to access data or applications over the internet.

To minimize latency in these situations, businesses may need to negotiate with their ISPs for more bandwidth or higher priority for their traffic, or consider using a different ISP or internet connection that is better suited to their needs.

When a server has to process a large amount of data or handle many requests simultaneously, it can slow down the response time and increase latency.

Here are some examples of how server processing time can cause latency for businesses:

- High server load: If a server is processing a large amount of data or handling many requests simultaneously, it can slow down the network response time and increase latency.

- Insufficient processing power: If a server does not have enough processing power to handle the workload, it may struggle to keep up with requests, leading to latency.

- Poorly optimized applications: If an application is not optimized for efficient data processing, it may take longer to respond to requests, leading to latency.

- Database issues: If a server is accessing a database, database performance issues such as slow queries or inefficient indexing can cause latency as the server waits for the database to respond.

- Third-party services: If a server is relying on third-party services such as APIs or content delivery networks (CDNs), latency issues with those services can cause overall latency for the business.

In all of these examples, server processing time can cause latency as the server struggles to keep up with requests or data processing requirements.

To minimize latency in these situations, businesses may need to optimize their applications and databases, upgrade their server hardware, or use more efficient third-party services.

Physical obstacles or electromagnetic interference can disrupt the transmission of data, particularly on wireless links such as Wi-Fi, and cause latency as lost packets have to be sent again.

It's worth noting that the causes of latency can be complex and multifaceted, and may be influenced by factors such as network architecture, device configuration, and the specific applications or protocols in use.

Measuring latency is a critical aspect of maintaining optimal network performance and user experience.

There are several methods and tools available to measure latency, including ICMP tests like Ping and Traceroute, as well as more advanced network monitoring tools that provide real-time insights into network latency.

In this section, we'll explore the different methods and tools for measuring latency, and how to interpret the results to troubleshoot and address latency issues in your network.

Ping is a command-line tool that can be used to test the connection between two devices or servers. The ping command sends a small packet of data to the remote device or server and measures the time it takes for the packet to be sent and received. The round-trip time, or network RTT, is an indicator of the latency between the two devices.

- Open the command prompt or terminal on your computer.

- Type "ping" followed by the IP address or hostname of the device or server you want to test. For example, "ping www.google.com".

- Press enter to start the ping test.

- The ping command will send packets of data to the remote device or server and measure the time it takes for the packets to be sent and received. It will also report the number of packets sent and received, as well as the percentage of packets lost.

- After the test is complete, the ping command will display the round-trip time (RTT) for each packet, as well as the minimum, maximum, and average RTT.

In this example, the ping test shows that the average RTT between the computer and www.google.com is 10ms.

A lower RTT indicates lower latency, while a higher RTT indicates higher latency. This information can help businesses identify areas where network performance can be improved.
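If you want to collect these numbers from a script, the sketch below runs ping and extracts the minimum, average, and maximum RTT from its summary line. It assumes the summary format printed by ping on Linux (`rtt min/avg/max/mdev = ...`) and macOS (`round-trip min/avg/max/stddev = ...`). Windows prints a different summary that this sketch does not handle. The function names are our own.

```python
import re
import subprocess

# Matches the final summary line of Linux and macOS ping output.
_SUMMARY = re.compile(
    r"(?:rtt|round-trip) min/avg/max/\w+ = ([\d.]+)/([\d.]+)/([\d.]+)/[\d.]+ ms"
)


def parse_ping_summary(output: str) -> dict[str, float]:
    """Extract min, avg, and max RTT (in ms) from ping's summary line."""
    match = _SUMMARY.search(output)
    if match is None:
        raise ValueError("no RTT summary line found")
    minimum, average, maximum = (float(v) for v in match.groups())
    return {"min_ms": minimum, "avg_ms": average, "max_ms": maximum}


def run_ping(host: str, count: int = 4) -> str:
    """Run ping with a fixed packet count (-c on Linux and macOS)."""
    result = subprocess.run(
        ["ping", "-c", str(count), host],
        capture_output=True, text=True, check=False,
    )
    return result.stdout


# A hand-written sample line in the Linux format, for illustration only.
sample = "rtt min/avg/max/mdev = 9.512/10.021/10.734/0.412 ms"
print(parse_ping_summary(sample)["avg_ms"])  # 10.021
```

In practice you would call `parse_ping_summary(run_ping("www.google.com"))` and log the result over time.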

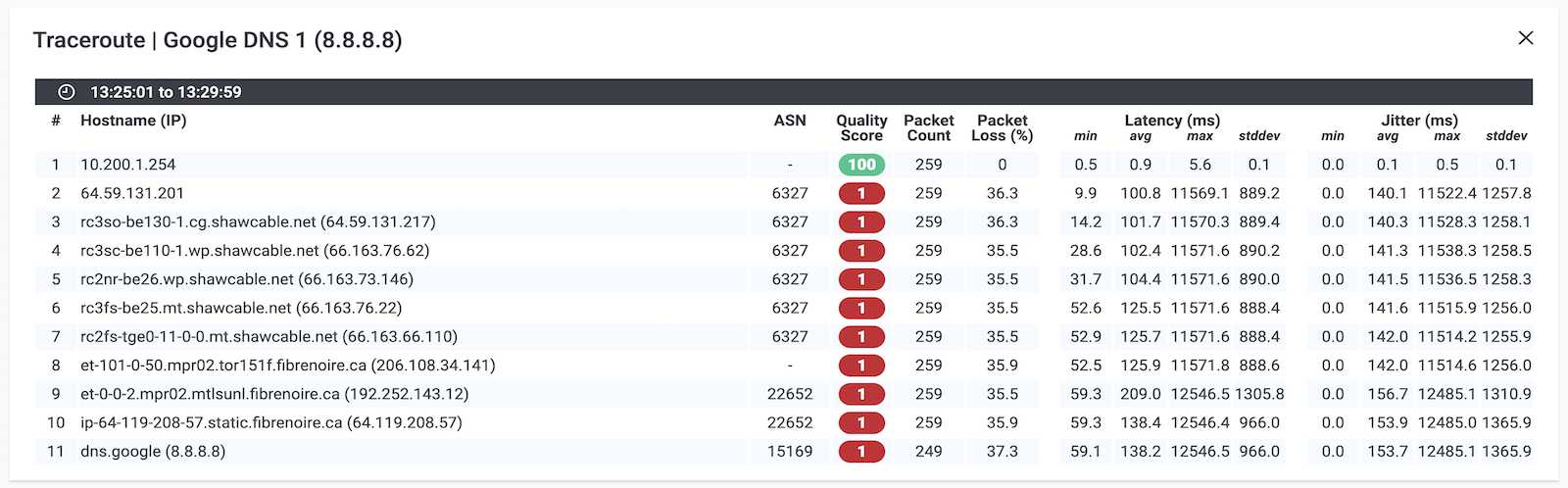

Traceroute is another command-line tool that can be used to identify the path that data takes from one device or server to another.

By measuring the time it takes for data packets to travel between each hop on the path, traceroute can provide a detailed view of the latency between devices.

Here's an example of a traceroute test for latency:

- Open the command prompt or terminal on your computer.

- Type "traceroute" (or "tracert" on Windows) followed by the IP address or hostname of the device or server you want to test. For example, "traceroute www.google.com".

- Press enter to start the traceroute test.

- The traceroute command will send packets of data to the remote device or server and display the time it takes for each packet to travel between each hop on the path.

- After the test is complete, the traceroute command will display the IP addresses of each hop, as well as the time it took for each packet to travel between each hop.

Here's an example output of a traceroute test:

In this example, the traceroute test shows the time it took for each packet to travel between each hop on the path from the computer to www.google.com.

The output shows that the packets traveled through 11 hops before reaching www.google.com, and the average latency was around 11-13ms. This information can help businesses identify areas where network performance can be improved.
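One simple way to read traceroute results is to look for the hop where the round-trip time increases the most, since that is often where delay is being added. The sketch below does this for a list of per-hop RTTs. The function name and the sample values are hypothetical. Keep in mind that some routers answer traceroute probes slowly even when they forward traffic quickly, so a single slow hop is a hint, not proof.

```python
def largest_latency_jump(hop_rtts_ms: list[float]) -> tuple[int, float]:
    """Return (hop_number, increase_ms) for the hop where RTT rises the most.

    hop_rtts_ms[i] is the RTT reported for hop i + 1.
    """
    if len(hop_rtts_ms) < 2:
        raise ValueError("need at least two hops")
    jumps = [
        (hop_rtts_ms[i] - hop_rtts_ms[i - 1], i + 1)
        for i in range(1, len(hop_rtts_ms))
    ]
    increase, hop_number = max(jumps)
    return hop_number, increase


# Hypothetical per-hop RTTs in milliseconds, not taken from a real trace.
rtts = [1.0, 2.0, 9.5, 10.0, 11.0]
print(largest_latency_jump(rtts))  # (3, 7.5)
```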

- Visual Traceroute Tool: To have a more visual representation of your Traceroute results, you can use Obkio Vision Free Visual Traceroute tool with graphical Traceroutes, Network Maps, and a Quality Matrix.

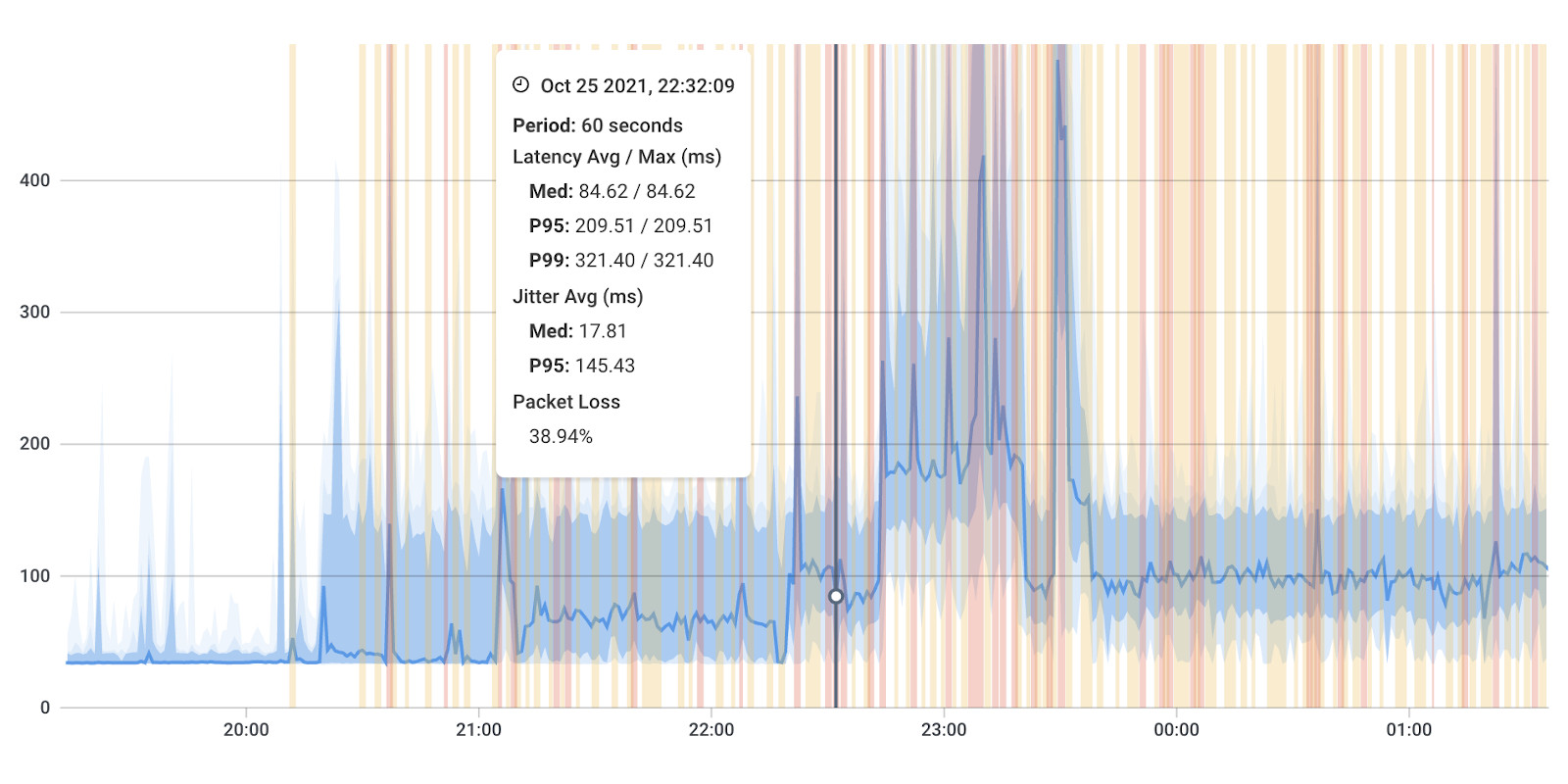

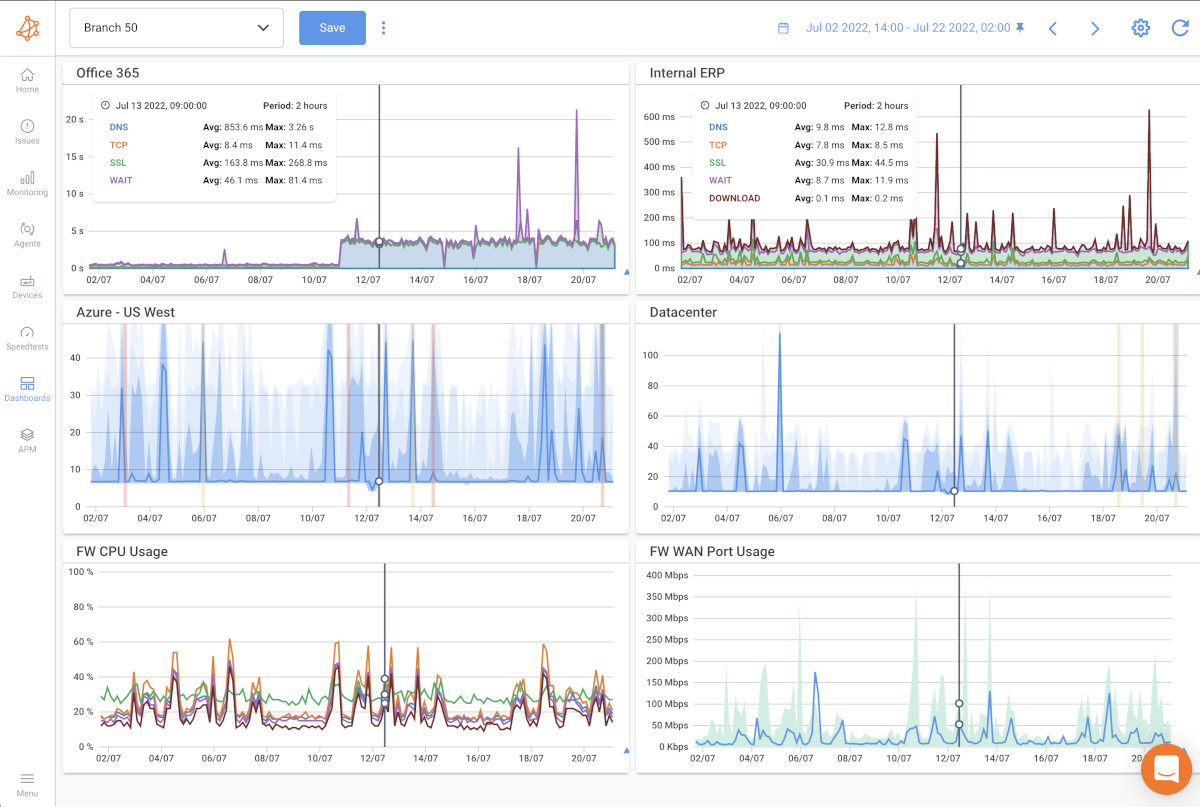

To accurately and automatically measure latency for all your network applications, you can use a tool like Obkio Network Performance Monitoring software.

This tool continuously measures latency by sending synthetic packets every few milliseconds and recording how long it takes for the packets to reach their destination.

How to measure latency with Obkio

- Set up the Obkio monitoring agent on your network devices or servers (using SNMP monitoring).

- Configure the monitoring agent to send synthetic packets every few milliseconds.

- Monitor the latency in real-time on the Obkio dashboard, which displays the average latency, maximum latency, and other network metrics.
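To illustrate the general idea of continuous measurement, here is a minimal sketch of a probe loop that collects latency samples at a fixed interval and summarizes them. It is not how Obkio is implemented. The `probe` argument is a placeholder for any function that returns one latency measurement in milliseconds, and the function names are our own.

```python
import statistics
import time
from typing import Callable


def summarize(samples_ms: list[float]) -> dict[str, float]:
    """Minimum, average, and maximum of a list of latency samples."""
    if not samples_ms:
        raise ValueError("no samples")
    return {
        "min_ms": min(samples_ms),
        "avg_ms": statistics.fmean(samples_ms),
        "max_ms": max(samples_ms),
    }


def monitor(probe: Callable[[], float], count: int, interval_s: float) -> dict[str, float]:
    """Call probe() `count` times, pausing `interval_s` seconds between calls."""
    samples = []
    for _ in range(count):
        samples.append(probe())
        time.sleep(interval_s)
    return summarize(samples)


print(summarize([10.0, 12.0, 11.0]))
# {'min_ms': 10.0, 'avg_ms': 11.0, 'max_ms': 12.0}
```

A dedicated monitoring tool does the same thing continuously, from multiple locations, and keeps the history so you can compare results over time.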

Measuring latency is important for understanding the performance of your network. Latency below roughly 100 milliseconds is considered acceptable for most applications, but higher levels can cause significant issues with network performance and user experience.

A network monitoring tool, like Obkio, continuously measures latency at the required frequency to ensure it catches the earliest signs of latency issues.

Sign up for Obkio's Free Trial to start measuring the latency of your network applications, proactively detect latency issues, and identify areas for improvement.

- 14-day free trial of all premium features

- Deploy in just 10 minutes

- Monitor performance in all key network locations

- Measure real-time network metrics

- Identify and troubleshoot live network problems

When measuring latency, it's important to consider factors such as the location of the devices being tested, the network conditions at the time of network testing, and the specific protocols and applications being used. Businesses may also need to perform testing at different times of the day or week to get an accurate picture of latency over time.

In conclusion, measuring latency is essential for maintaining optimal network performance. Network monitoring tools like Obkio can help businesses measure latency accurately and identify areas of high latency that need attention.

High latency can have a significant impact on network performance, leading to reduced throughput, disruptions to real-time applications (like Zoom), and even reduced reliability. By understanding that impact, businesses can take proactive steps to minimize it.

In the following section, we will explore the impact of latency on network performance in more detail.

Here are some of the ways latency can affect network performance:

- Reduced throughput: High latency can reduce the amount of data that can be transmitted over the network, leading to slower overall network performance.

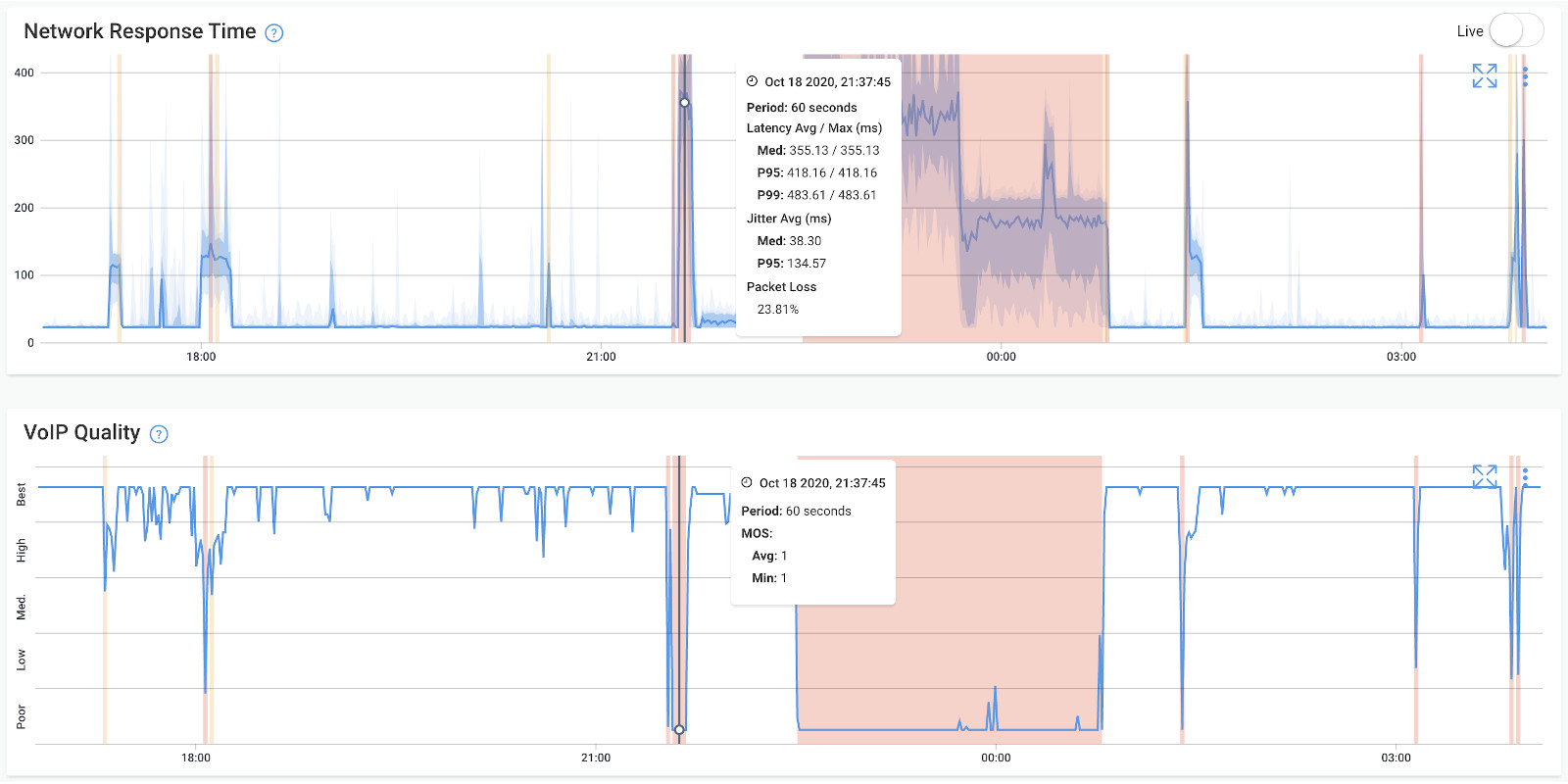

- Disruptions to real-time applications: Real-time applications such as video conferencing or voice-over-IP (VoIP) can be disrupted by latency, leading to choppy or delayed audio or video.

- Increased network congestion: High latency can contribute to network congestion, as data packets take longer to reach their destination, leading to slower overall network performance.

- Poor performance for time-sensitive applications: Applications that rely on time-sensitive data, such as financial transactions or manufacturing processes, can be negatively impacted by latency, leading to errors or delays in processing.

- Reduced reliability: High latency can increase the likelihood of errors or dropped connections, leading to reduced reliability for network applications.
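The link between latency and throughput can be shown with a short calculation. A protocol such as TCP sends a limited window of data and then waits for an acknowledgement, so a single connection cannot move more than one window per round trip. The sketch below applies that rule of thumb; the function name is our own, and the 64 KiB window is just an example value.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on one connection's throughput: one window per round trip."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return window_bytes * 8 / (rtt_ms / 1000.0) / 1e6


WINDOW = 64 * 1024  # example window size of 64 KiB

print(f"{max_tcp_throughput_mbps(WINDOW, 10):.1f} Mbit/s at 10 ms")    # 52.4
print(f"{max_tcp_throughput_mbps(WINDOW, 100):.1f} Mbit/s at 100 ms")  # 5.2
```

With the same window, ten times the latency means one tenth of the maximum throughput, no matter how much bandwidth the link has.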

In addition to these impacts, high latency can also lead to frustration among customers and employees, which can have a negative impact on the reputation of the business.

By taking proactive steps to minimize latency, businesses can improve overall network performance, maintain reliability, and increase productivity.

Beyond its effect on network performance, latency also affects businesses and user experience in several ways.

In addition to reduced throughput and disruptions to real-time applications, high latency can frustrate customers and employees, leading to a poor user experience and even loss of revenue. Let's take a closer look at some of these effects.

Here are some of the ways latency can affect businesses and user experience:

- Reduced productivity: High latency can slow down business processes, making it difficult for employees to complete tasks efficiently and effectively.

- Increased frustration: High latency can lead to frustration among customers and employees, which can have a negative impact on the reputation of the business.

- Disruptions to real-time applications: Real-time applications such as video conferencing or voice-over-IP (VoIP) can be disrupted by latency, leading to choppy or delayed audio or video.

- Poor user experience: Latency can cause slow loading times for web pages, videos, or other content, leading to a poor user experience.

- Loss of revenue: High latency can lead to lost revenue, as customers may become frustrated and abandon their purchases or services.

- Increased costs: High latency can increase costs for businesses, as it may require additional resources to diagnose and fix latency issues.

Overall, latency can have a significant impact on businesses and user experience, leading to reduced productivity, increased frustration, and even loss of revenue.

To minimize the impact of latency, businesses can take proactive steps such as upgrading their network infrastructure, using network monitoring tools, and optimizing their applications and databases.

In the following section, we will look at what counts as acceptable latency and what counts as high latency.

Acceptable latency refers to a level of latency that does not significantly impact network performance or user experience. In general, acceptable latency is considered to be less than 100 milliseconds for most applications.

On the other hand, high latency refers to a level of latency that negatively impacts network performance or user experience. The threshold for what is considered high latency can vary depending on the specific application and user expectations. In general, latency over 150 milliseconds is considered high and can lead to delays, disruptions, and poor user experience.

For time-sensitive applications like real-time video or voice communication, even latency over 50 milliseconds can be considered high and can negatively impact the user experience.

Here are two examples of acceptable latency in different scenarios:

For video conferencing and voice over IP (VoIP) applications, acceptable latency is generally considered to be less than 50 milliseconds.

This is because these applications require real-time communication, and any delay or disruption can cause significant issues with communication quality and user experience.

High latency can negatively impact video conferencing and VoIP in several ways. Here are some examples:

- Audio and video quality: High latency can cause audio and video to become out of sync or choppy, leading to a poor user experience.

- Delays in communication: High latency can cause delays in communication, making it difficult for participants to engage in real-time conversation.

- Loss of data: In some cases, packets that arrive too late to be played back are discarded, leading to gaps in communication or dropped connections.

- Reduced reliability: High latency can increase the likelihood of errors or dropped connections, leading to reduced reliability for video conferencing and VoIP applications.

In general, businesses should measure latency as part of VoIP monitoring and aim to keep it below the threshold for high latency to ensure optimal video conferencing and VoIP performance.

For email, file transfers, and web browsing, acceptable latency is generally considered to be less than 300 milliseconds. These applications do not require real-time communication, so slightly higher levels of latency are acceptable.

However, high latency can still negatively impact these applications in several ways. Here are some examples:

- Slow loading times: High latency can cause slow loading times for web pages or files, leading to a poor user experience.

- Reduced productivity: High latency can slow down file transfers or email communication, reducing productivity for users.

- Incomplete data transfers: In some cases, high latency can cause transfers to time out before they finish, leaving missing or partial files.

- Increased frustration: High latency can cause frustration among users, leading to dissatisfaction and potentially lost business.

In conclusion, understanding the difference between acceptable latency and high latency is crucial for businesses to maintain optimal network performance and user experience.

Businesses should aim to maintain latency levels below the threshold for high latency to ensure optimal network performance and user experience. Regular monitoring and analysis of network latency can help identify areas of high latency and provide insights for addressing them proactively.
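The thresholds quoted in this section can be turned into a simple check for your own measurements. The sketch below uses the figures given above (50 ms for real-time applications, 100 ms for most applications, and 300 ms for email, file transfers, and web browsing). The category names and the function name are our own labels, and the right thresholds for your business may differ.

```python
# Acceptable-latency thresholds in milliseconds, as quoted in this article.
ACCEPTABLE_MS = {
    "realtime": 50,   # video conferencing and VoIP
    "general": 100,   # most applications
    "bulk": 300,      # email, file transfers, and web browsing
}


def is_acceptable(latency_ms: float, kind: str = "general") -> bool:
    """True if the measured latency is below the threshold for this kind of traffic."""
    return latency_ms < ACCEPTABLE_MS[kind]


print(is_acceptable(80))              # True
print(is_acceptable(80, "realtime"))  # False
print(is_acceptable(250, "bulk"))     # True
```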

Reducing latency can be a complex task that depends on the specific problem and its underlying causes. However, there are several effective methods that businesses can use to reduce latency and improve network performance.

By identifying the root causes of latency and taking proactive steps to address them, businesses can ensure a strong and reliable internet connection that supports optimal productivity and user experience.

Here are some of the most effective ways to reduce latency:

- Upgrade network infrastructure: Upgrading network hardware, such as switches, routers, and cables, can improve network speed and reduce latency.

- Optimize applications and databases: Applications and databases that are optimized for performance can help reduce latency by minimizing data transfer and processing times.

- Use content delivery networks (CDNs): CDNs can help reduce latency for remote users by caching content on servers closer to the user's location, reducing the distance data needs to travel.

- Use edge computing: Edge computing can reduce latency by processing data closer to the source or destination, rather than sending it back and forth across the network.

- Implement Quality of Service (QoS): QoS can prioritize network traffic based on application priority, ensuring that real-time applications like video conferencing and VoIP receive the bandwidth and low latency they need for optimal performance.

- Reduce network congestion: Network congestion can increase latency, so businesses should aim to reduce congestion by implementing network traffic management tools or upgrading bandwidth capacity.

“A towel, The Hitchhiker's Guide to the Galaxy says, is about the most massively useful thing an interstellar hitchhiker can have. Partly it has great practical value. You can wrap it around you for warmth as you bound across the cold moons of Jaglan Beta; you can lie on it on the brilliant marble-sanded beaches of Santraginus V, inhaling the heady sea vapors; you can sleep under it beneath the stars which shine so redly on the desert world of Kakrafoon; use it to sail a miniraft down the slow heavy River Moth; wet it for use in hand-to-hand combat; wrap it round your head to ward off noxious fumes or avoid the gaze of the Ravenous Bugblatter Beast of Traal (such a mind-bogglingly stupid animal, it assumes that if you can't see it, it can't see you); you can wave your towel in emergencies as a distress signal, and of course dry yourself off with it if it still seems to be clean enough.” ― Douglas Adams, The Hitchhiker's Guide to the Galaxy. (I’m pretty sure you can fix Latency with a Towel.)

By taking these steps to reduce latency, businesses can improve network performance, maintain a positive user experience, and increase productivity.
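To show the idea behind QoS prioritization mentioned above, here is a minimal sketch of a strict-priority queue, where latency-sensitive packets are always sent before everything else. In a real network this is configured on routers and switches instead of being written in application code. The class name, the priority numbers, and the packet labels are all hypothetical.

```python
import heapq
import itertools


class PriorityScheduler:
    """Strict-priority queue: a lower priority number is sent first."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._order = itertools.count()  # keeps arrival order within a priority

    def enqueue(self, packet: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._order), packet))

    def dequeue(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


queue = PriorityScheduler()
queue.enqueue("backup-1", priority=2)
queue.enqueue("voip-1", priority=0)
queue.enqueue("web-1", priority=1)
queue.enqueue("voip-2", priority=0)

sent = [queue.dequeue() for _ in range(len(queue))]
print(sent)  # ['voip-1', 'voip-2', 'web-1', 'backup-1']
```

The VoIP packets leave first even though the backup packet arrived before them, which is how QoS keeps real-time traffic from waiting behind bulk transfers.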

So long, and thanks for all the latency! By now, you should have a good understanding of what latency is, what causes it, and how to reduce it.

As you journey forth into the vast and complex realm of network performance, remember to keep your towel (and network monitoring tools) close at hand.

And if you ever find yourself lost in the interstellar abyss of network latency, don't panic!

Take a deep breath, refer back to this guide, and remember that with the right approach and tools, you can always overcome the challenges of latency and achieve optimal network performance. So long, and happy network monitoring!

Obkio Blog
